Introduction

Project Overview

Technical Implementation

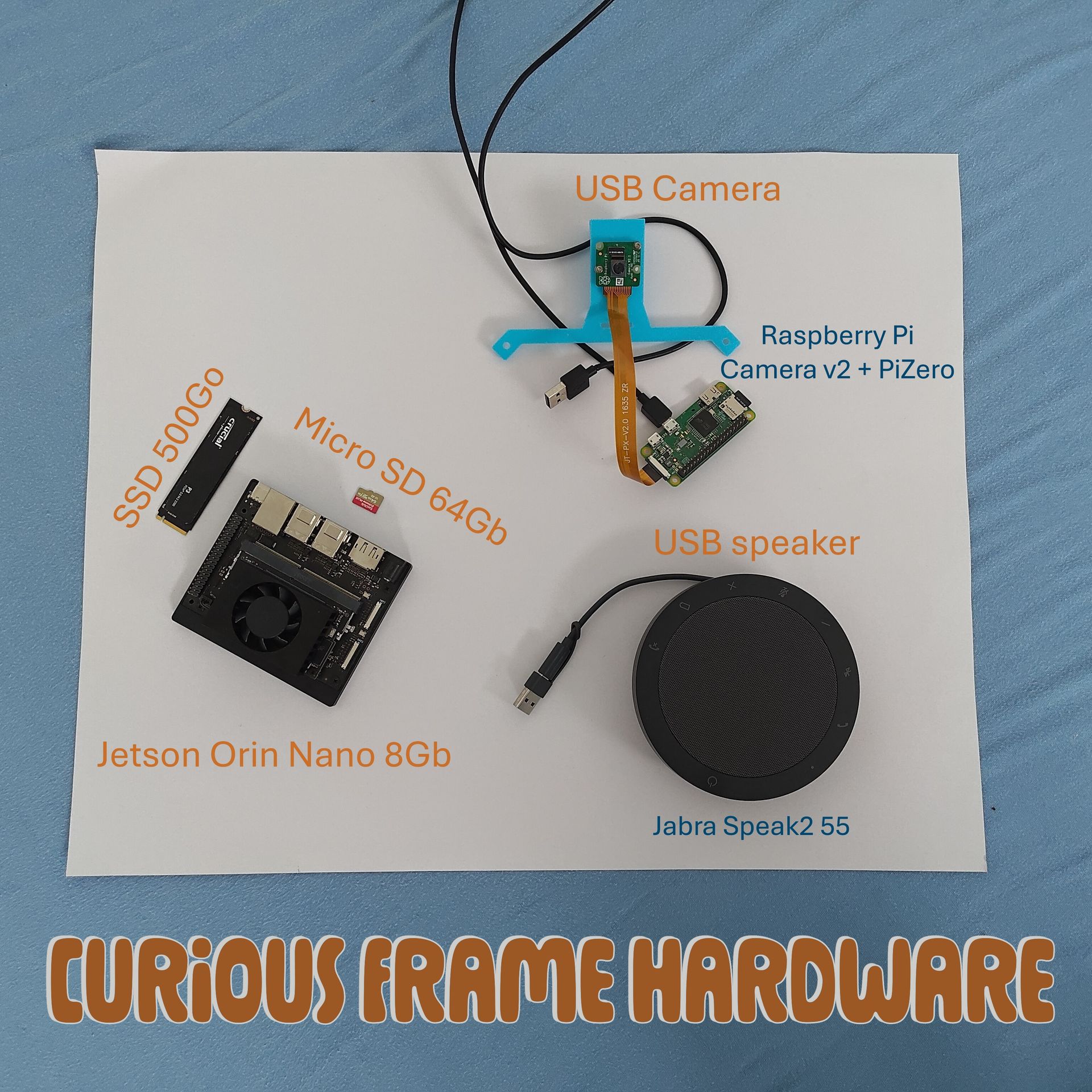

- Image Capture: A Raspberry Pi camera is used to capture images of objects.

- Edge Computing Platform: The NVIDIA Jetson Orin Nano, an ARM board with 8GB of memory shared between the CPU and GPU, serves as the computing platform. An SSD is used for better performance, and the OS runs from a micro SD card to reduce default RAM usage.

- Sound Output: A Jabra Speak2 55 speaker is connected via USB to provide vocal feedback.

- Cardboard Frame: A simple cardboard frame is used to point at objects for description.

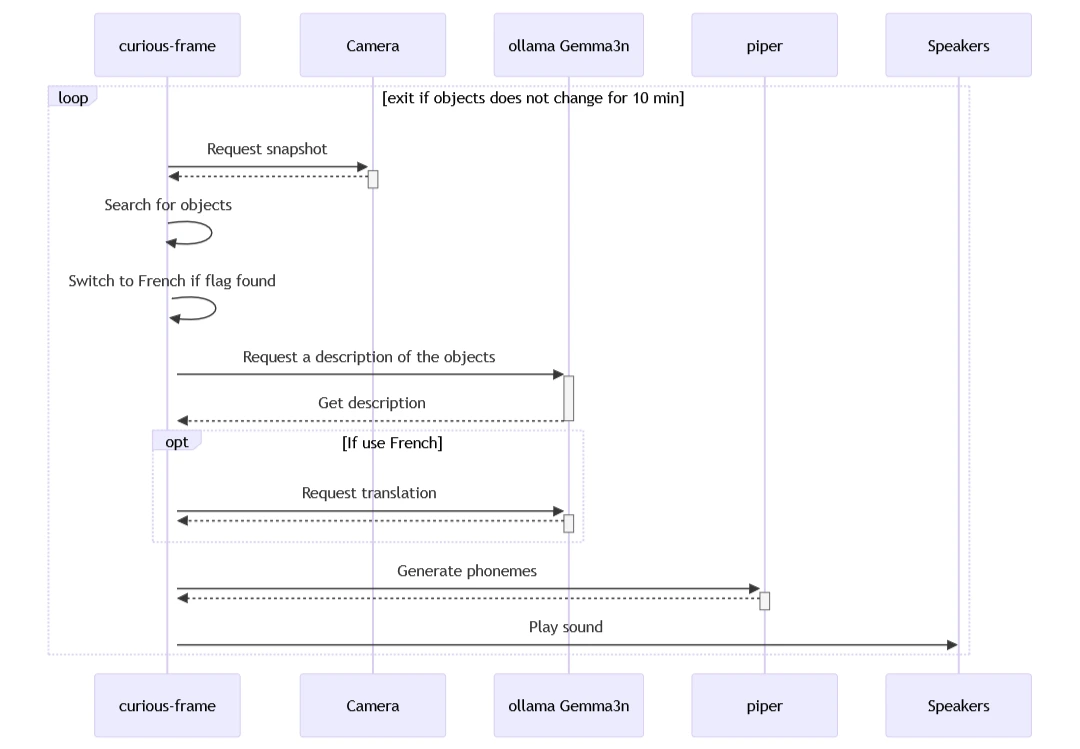

The workflow involves capturing an image, processing it using a Vision Language Model (VLM), generating a textual explanation, and converting that text to speech using a Text-To-Speech (TTS) system.
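The workflow above can be sketched as a chain of three stages. This is a minimal illustration, not the project's actual code: `run_pipeline`, `PipelineResult`, and the three callables are hypothetical names, and each stage is passed in as a function so the camera, the VLM, and the TTS engine can be swapped independently.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PipelineResult:
    """Output of one pass through the capture -> describe -> speak chain."""
    description: str
    audio: bytes


def run_pipeline(
    capture: Callable[[], bytes],        # hypothetical: returns encoded image bytes
    describe: Callable[[bytes], str],    # hypothetical: VLM call, image -> text
    synthesize: Callable[[str], bytes],  # hypothetical: TTS call, text -> audio
) -> PipelineResult:
    """Run the three stages in order and return the text and audio."""
    image = capture()
    text = describe(image)
    audio = synthesize(text)
    return PipelineResult(description=text, audio=audio)


if __name__ == "__main__":
    # Stub stages stand in for the camera, the VLM and the TTS engine.
    result = run_pipeline(
        capture=lambda: b"fake-image-bytes",
        describe=lambda image: "a red apple",
        synthesize=lambda text: text.encode("utf-8"),
    )
    print(result.description)
```

Keeping the stages as plain callables makes it easy to time each one separately, which helps when tracking down latency on a constrained device.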

Image Capture and Processing Pipeline

Integration of Vision Language Model for Object Recognition

Text-To-Speech (TTS)

The text generated by the Gemma3n model is converted to speech using Piper, a Text-To-Speech system.
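One way to drive Piper from Python is through its command-line interface, which reads text on standard input and writes a WAV file. The sketch below assumes the `piper` executable is on the `PATH` and that a voice model has been downloaded; the helper names and the `voice.onnx` path are placeholders, not details taken from the project.

```python
import subprocess
from pathlib import Path


def build_piper_command(model_path, output_wav, executable="piper"):
    """Build the Piper CLI invocation (text is supplied separately on stdin)."""
    return [
        executable,
        "--model", str(model_path),
        "--output_file", str(output_wav),
    ]


def speak_to_wav(text, model_path, output_wav, executable="piper"):
    """Synthesize `text` into `output_wav` by piping it to the Piper CLI."""
    command = build_piper_command(model_path, output_wav, executable)
    # Piper reads the text to speak from standard input.
    subprocess.run(command, input=text.encode("utf-8"), check=True)
    return Path(output_wav)


if __name__ == "__main__":
    # "voice.onnx" is a placeholder for whichever voice model is installed.
    print(build_piper_command("voice.onnx", "description.wav"))
```

The resulting WAV file can then be played through the USB speaker with any audio player available on the board.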

Challenges and Solutions

The project faced several challenges:

- Latency: Minimizing latency is crucial for a smooth user experience on edge devices with limited processing capabilities.

- Memory Limitations: Edge devices have limited memory capacity, requiring efficient data storage and processing approaches.

- Tooling: Getting the tool stack working on the NVIDIA Jetson Orin Nano proved difficult.

To address these challenges, the project tested a Mistral model (Ministral 3 3B), which integrates vision and language in a single model. Because the image is processed directly by the LLM, and the model is set to think in the target language, the pipeline avoids a translation round trip. The result is more efficient processing and reduced latency.
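As an illustration of sending the image straight to a multimodal model while fixing the response language, the sketch below builds a request body in the widely used OpenAI-style chat format. Several things here are assumptions: the source does not say how the model is served, so an OpenAI-compatible endpoint is assumed, and the model identifier, the prompts, and the choice of French are placeholders.

```python
import base64


def build_vision_request(image_bytes, language="French", model="ministral-3-3b"):
    """Build a chat request that carries the image and pins the output language.

    The model name and prompts are placeholders, and the payload layout assumes
    an OpenAI-compatible chat endpoint.
    """
    image_b64 = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "system",
                # Asking for the target language up front avoids a separate
                # translation step after generation.
                "content": f"Reason and answer only in {language}.",
            },
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": "Describe the object in the image."},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:image/jpeg;base64,{image_b64}"},
                    },
                ],
            },
        ],
    }


if __name__ == "__main__":
    payload = build_vision_request(b"\xff\xd8 placeholder JPEG bytes")
    print(payload["messages"][0]["content"])
```

The payload would then be posted to whichever inference server hosts the model; that step is left out because it depends on the deployment.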

Future Plans

The future plans for the Curious Frame project include:

- Integration with Reachy Mini: Plans are underway to integrate the system with Reachy Mini, a robot that can provide a more interactive learning experience.

- Improvements in Text-To-Speech Technology: The current TTS system, Piper, has a known issue with dropping the first phonemes. The team is looking into alternatives to improve this aspect.

Conclusion

For more details, refer to the Gemma3n Kaggle hackathon article (here), the YouTube video in French (here), and the code repository (here).